The Quiet Web is the part of the Internet that is public but effectively undiscoverable. We hear plenty of terms that geeks talk about, such as the Dark Web, the Deep Web, or even the Surface Web. What we rarely put a name to are the sites we are fairly sure exist but simply cannot locate and browse unless we know the exact URL.

In my O Scale adventures, I sometimes run across these excellent quiet sites by accident, and often the only way to find them again is to bookmark them for future reference. But why should I care? Usually, I am hunting for information to improve my own model building craft. Whether I am learning something new or simply enjoying the work of other model builders, this is one way the Internet makes us more productive through shared information.

We are not going to dig deeply into definitions here, since you can explore them on your own, but we will pin down what the quiet web really is.

The general term most often used is “the deep web.” In the specific sense I mean here (public-facing sites that aren’t indexed or are very hard to discover despite being on the open internet), the more precise concepts are:

- The deep web (broad category)

- “Unindexed pages” (the precise SEO term)

- “Static websites” (related but not the same)

The deep web is the umbrella term for anything not indexed by search engines, even if it’s completely benign and publicly accessible. Examples include:

- Old static sites no longer linked anywhere

- Personal blogs with no inbound links

- Pages blocked from indexing with `robots.txt` or `noindex`

- Orphaned pages on otherwise normal websites
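For readers who like to tinker, here is a minimal Python sketch of how a well-behaved crawler decides whether it may visit a page, using the standard library's `urllib.robotparser`. The robots.txt rules and the example.com URLs are hypothetical, made up for illustration; a real crawler would first download the site's actual robots.txt file.

```python
from urllib.robotparser import RobotFileParser


def is_crawl_allowed(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """Return True if the given robots.txt rules let user_agent fetch url."""
    parser = RobotFileParser()
    # parse() accepts the file as a list of lines, so no network access is needed.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt: every crawler is asked to stay out of /private/.
EXAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
"""

print(is_crawl_allowed(EXAMPLE_ROBOTS, "https://example.com/layouts/index.html"))  # True
print(is_crawl_allowed(EXAMPLE_ROBOTS, "https://example.com/private/notes.html"))  # False
```

Keep in mind that robots.txt is a polite request, not a lock: the blocked page is still public, and anyone who knows the exact URL can open it in a browser. That is exactly what makes it part of the quiet web.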

Unindexed pages are what SEO literature calls non-indexed pages or unindexed content. They are:

- Fully accessible via a normal browser

- Hosted on the public internet

- Not included in search engine results because they were never crawled, were blocked, or have no links pointing to them
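Why does having no links pointing to a page matter so much? Crawlers find most new pages by following links from pages they already know. The toy sketch below is a simple breadth-first walk over a hypothetical link graph (the page names are invented, and this is not any search engine's actual algorithm). It shows how a page that links out to others, but that nothing links to, is never reached.

```python
from collections import deque


def discoverable_pages(links: dict[str, list[str]], seeds: list[str]) -> set[str]:
    """Breadth-first walk: return every page reachable from the seed pages."""
    seen = set(seeds)
    queue = deque(seeds)
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen


# Hypothetical link graph: each page maps to the pages it links to.
LINKS = {
    "popular-forum": ["club-site", "vendor"],
    "club-site": ["layout-tour"],
    "vendor": [],
    "layout-tour": [],
    "old-build-log": ["layout-tour"],  # links out, but nothing links in
}

found = discoverable_pages(LINKS, ["popular-forum"])
quiet = set(LINKS) - found
print(sorted(quiet))  # ['old-build-log']
```

The old build log is perfectly public, yet a crawler that starts from the popular forum never learns it exists.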

Static sites are often simple HTML pages that rarely change. They tend to become unindexed over time if no one links to them, but “static” refers to how they’re built, not how discoverable they are.

Why do these sites become hard to find?

A normal website becomes effectively invisible when:

- No other site links to it (no backlinks)

- It’s old and abandoned

- It uses `noindex` or blocks crawlers

- It’s on a small host with low crawl priority

- It’s static and never updated
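The `noindex` signal usually travels in one of two places: a `<meta name="robots" content="noindex">` tag in the page's HTML, or an `X-Robots-Tag` HTTP response header. This minimal sketch checks both using Python's standard `html.parser`. It is deliberately simplified: it ignores crawler-specific meta tags (such as `googlebot`) and user-agent prefixes in the header, and the sample HTML is made up for illustration.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # HTMLParser lowercases attribute names; values may be None.
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() == "robots":
            content = attr_map.get("content", "")
            self.directives.extend(d.strip().lower() for d in content.split(","))


def has_noindex(html: str, x_robots_tag: str = "") -> bool:
    """True if the page's meta tag or its X-Robots-Tag header says noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_directives = [d.strip().lower() for d in x_robots_tag.split(",")]
    return "noindex" in parser.directives or "noindex" in header_directives


page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
print(has_noindex(page))                                  # True
print(has_noindex("<title>Layout tour</title>"))          # False
print(has_noindex("<p>hi</p>", x_robots_tag="noindex"))   # True
```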

Terms like the “quiet web” or “the unindexed web” describe pages that are public, not part of the dark web, and not “deep” in any secretive sense. They simply are not discoverable.

Now, I realize this blog article is pretty dry reading. However, to become proficient at discovering content that matters to our hobby, we need this background, some of which involves ancient web technology. Search engines return results from indexes built by bots (crawlers) that visit HTML web pages. The big gripe, of course, is that the popular search engines return a lot of not-so-useful junk much of the time.

We also know that there is more content than just HTML pages: forums, old phpBB bulletin boards, images, and PDF files, to name a few. A bot may index the link to a PDF file yet know little about its content, especially when the PDF is a scanned image with no text layer. All this is changing, and rapidly, to our benefit.

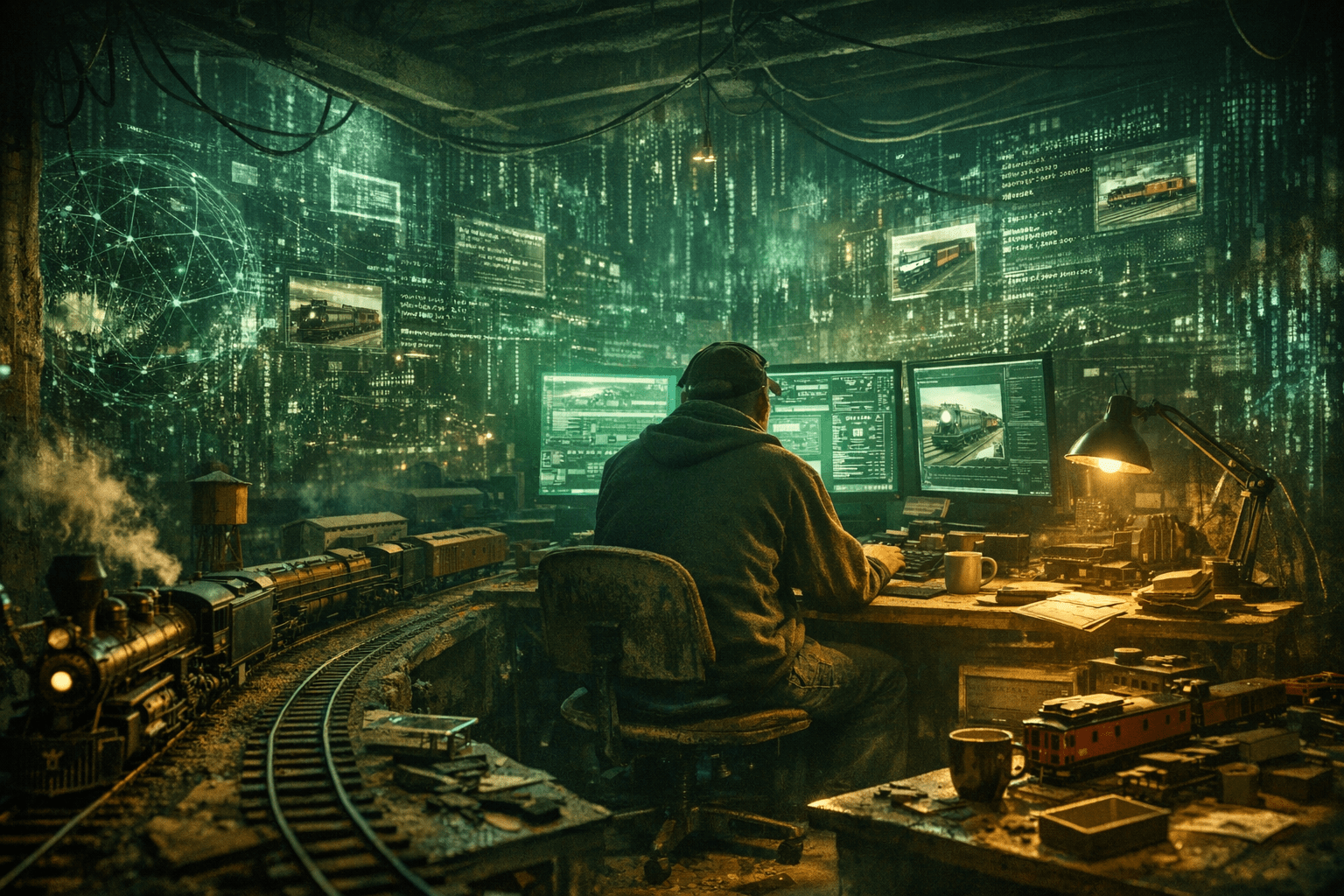

Thanks to some of the Artificial Intelligence tools, we can discover and access information on the quiet web more directly. In the next blog article, we will take an extremely deep dive to show how analytics brings to light what was previously unknown and answers a line of questioning that ultimately vindicates the New Tracks Modeling work of Jim Kellow, MMR. I promise you it will open up a whole new world; at least, it did for me.

Read the follow-up blog article at this link: The Quiet Web & The TAMR Production Culture